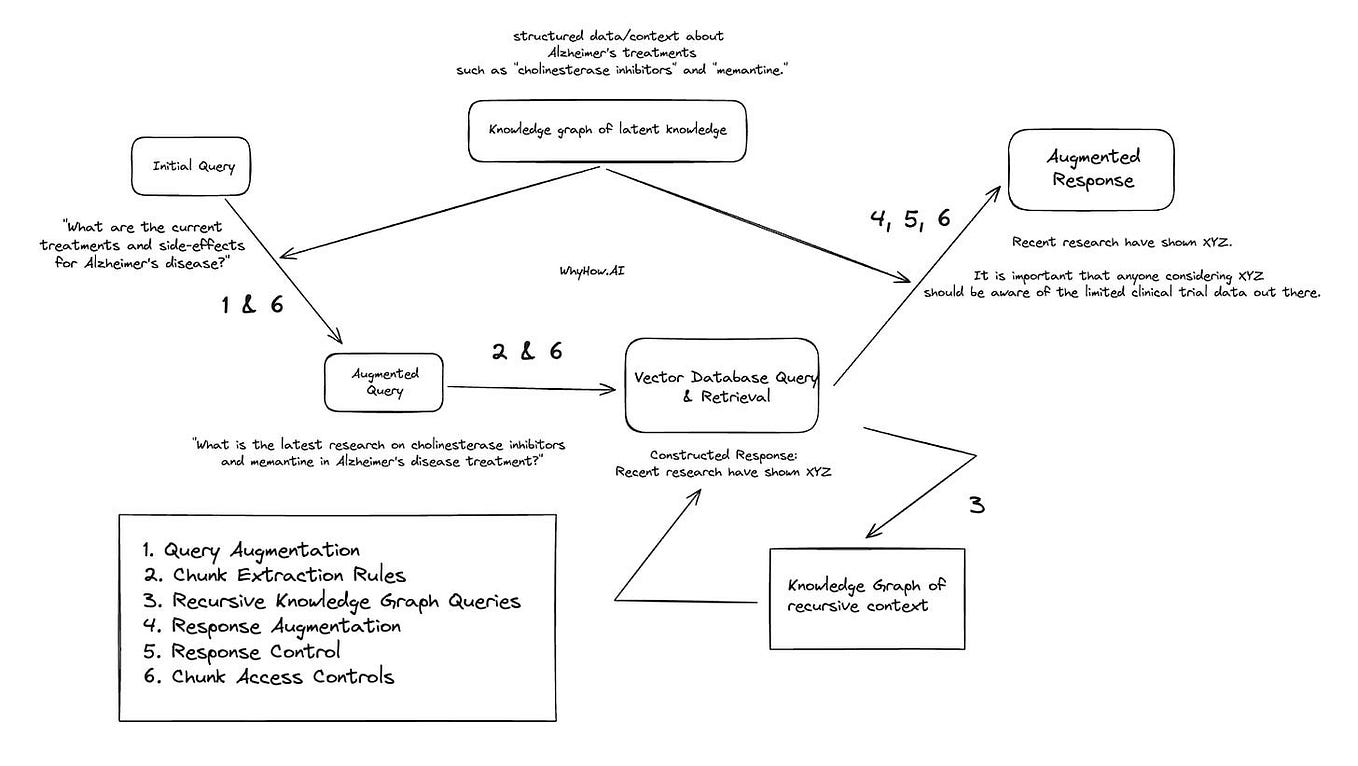

An introduction to RAG and simple/complex RAG, by Chia Jeng Yang, WhyHow.AI

This article will discuss one of the most applicable uses of Large Language Models (LLMs) in enterprise use cases, Retrieval-Augmented Generation (“RAG”). RAG is the biggest business use case of…

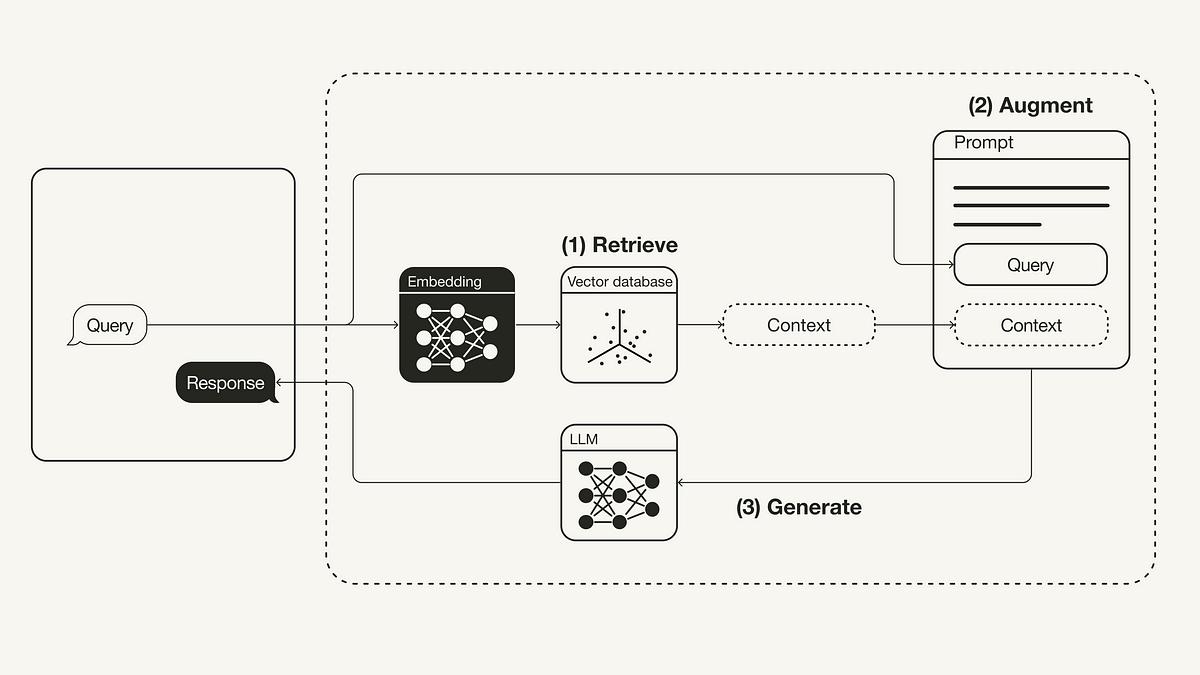

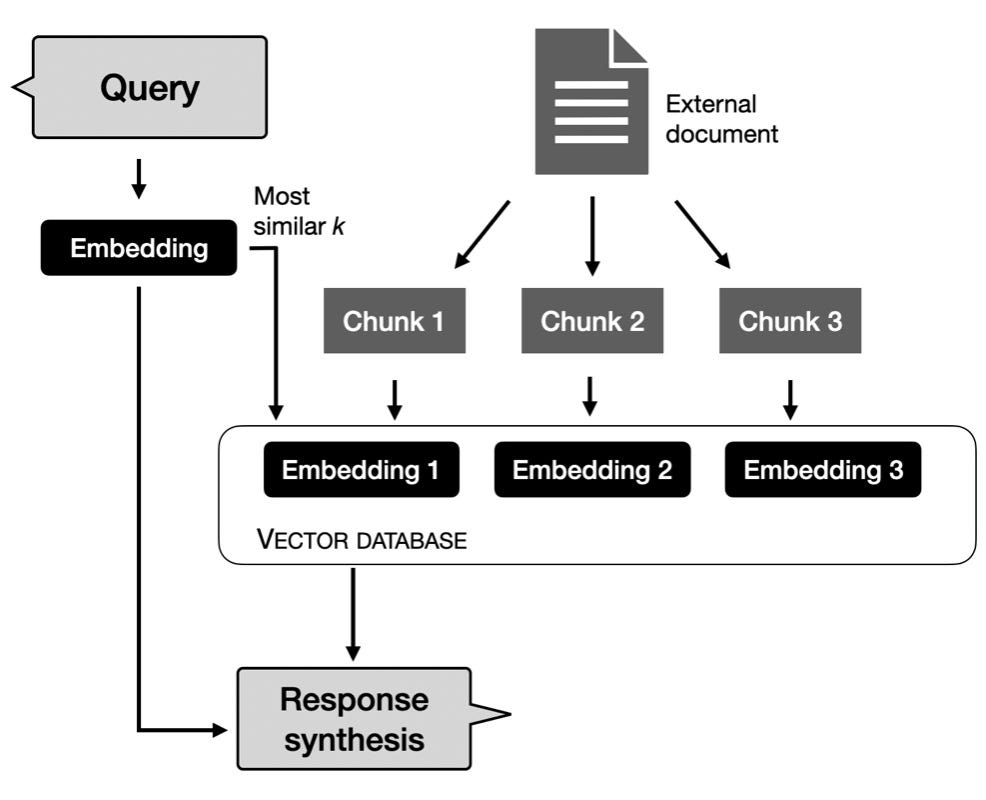

Retrieval-Augmented Generation (RAG): From Theory to LangChain Implementation, by Leonie Monigatti

Unlocking the Black Box: How Chain-of-Note Brings Transparency to Retrieval-Augmented Models (RAG), by Raphael Mansuy

Retrieval Augmented Generation (RAG) Inference Engines with LangChain on CPUs, by Eduardo Alvarez

Are You Pre-training your RAG Models on Your Raw Text?, by Anshu, ThirdAI Blog

List: RAG - Q&A, Curated by Alexperezreina

Chunking Strategies Optimization for Retrieval Augmented Generation (RAG) in the Context of Generative AI, by thallyscostalat

RAG vs. Fine-tuning: Here's the Detailed Comparison, by Amit Yadav

Modular RAG and RAG Flow: Part II, by Yunfan Gao, Jan 2024

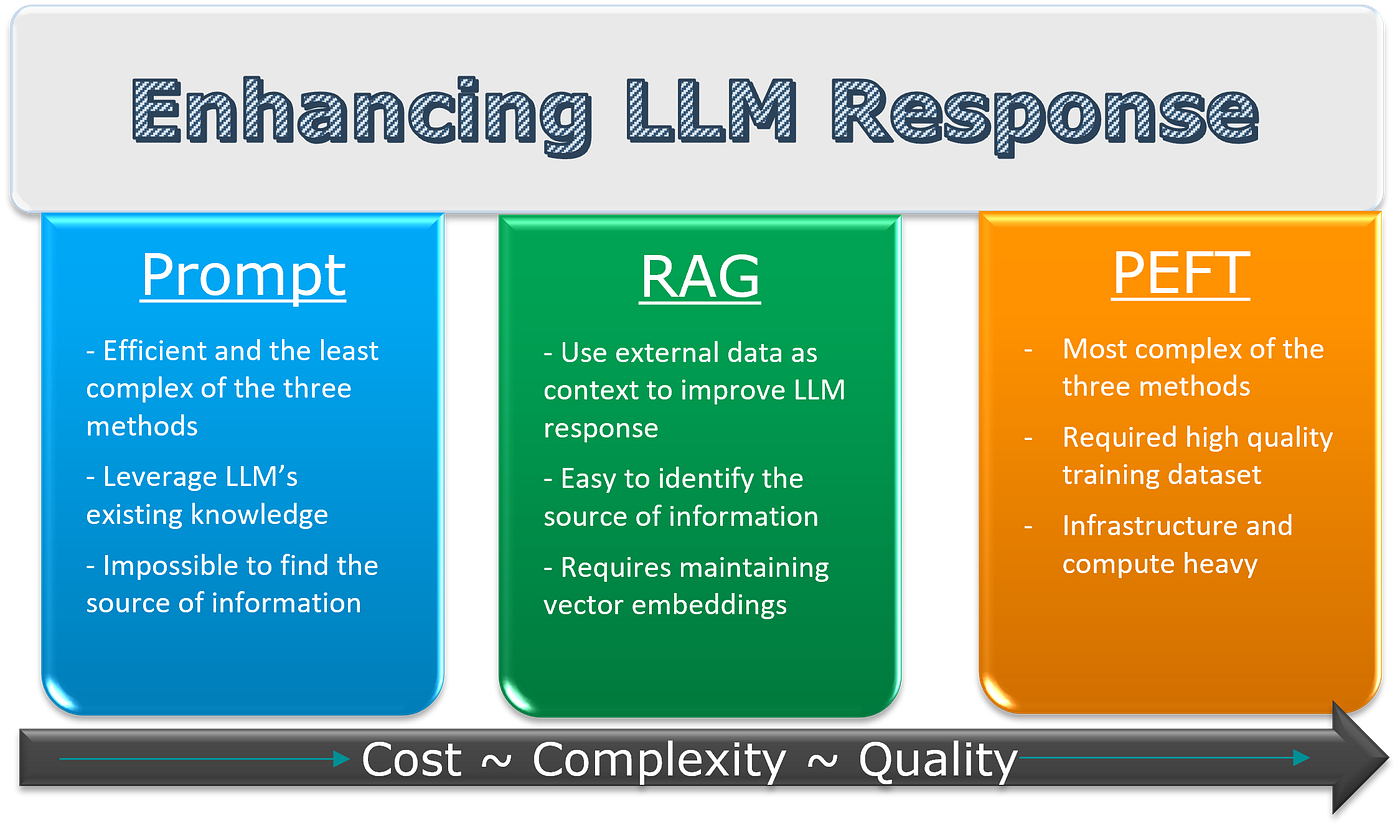

Optimizing 🚀 Large Language Models🤖: Strategies Including Prompts, RAG, and Parameter Efficient Fine-Tuning, by Vasanth S

Structured PDF for the RAG Era, i.e. Chat-with-PDF Applications, by Joey Yang-Li